Welcome to The Next Rex

Transforming Visions into Reality

Your Gateway to IT and Engineering Excellence.

Schedule an Appointment Today

Schedule an appointment today and embark on a journey toward a sustainable future with Renewable Energy Realization.

Building the future

Our Expertise

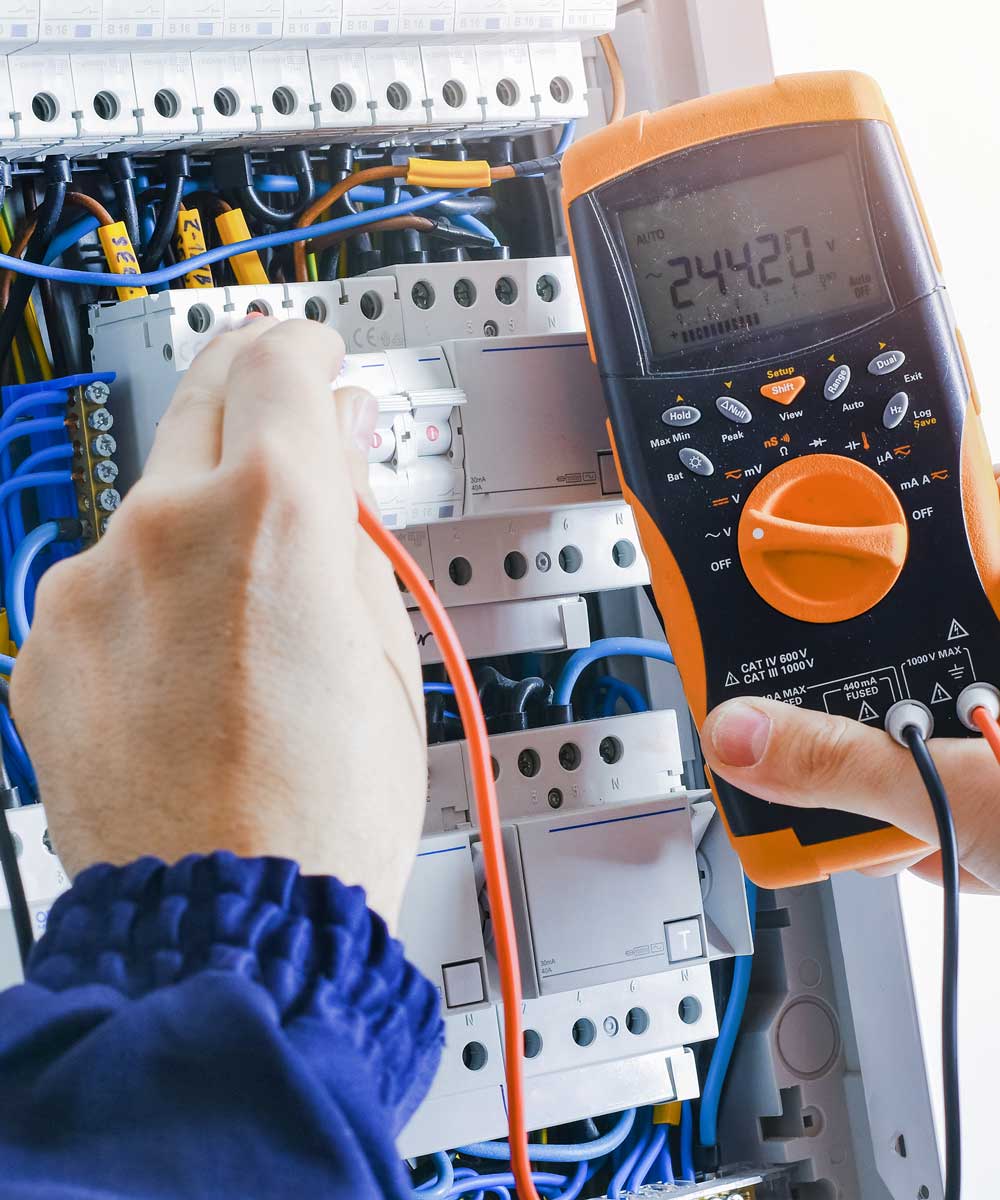

Engineering Consultancy

At The Next Rex, we’re dedicated to shaping the future through innovative engineering solutions. Our passion for excellence and commitment to quality drive every project we undertake.

IT Consultancy

At The Next Rex, we’re on a mission to supercharge your online presence. Our digital marketing services are designed to help businesses like yours thrive in the digital landscape.

Engineering & IT Secondments

We understand the challenges of securing skilled resources for your projects without long-term commitments. That’s where we step in to provide the support you need.

Renewable Energy Realization

Create. Enhance. Sustain.

We embody a commitment to creation, enhancement, and sustainability. Our passion lies in transforming ideas into tangible solutions that fuel innovation and pave the way for a sustainable future.

Building The Future With Ideas

Innovation is the cornerstone of progress. We embark on a journey to turn imaginative ideas into reality, constructing a future marked by creativity, growth, and sustainable solutions.

Excellence And Innovation Built Into Every Design

Every design we craft is a testament to excellence and innovation. Our unwavering dedication ensures that each solution we deliver surpasses expectations, setting new standards in the industry.

Economic Growth (%)

Prosperity through innovation and investment.

Average Rating (%)

Consistently earning top-notch client reviews.

Reduced Emissions (%)

Pioneering eco-friendly, emissions-reducing solutions.

Designing the Future With Excellence

Crafting tomorrow with excellence. Our innovative, quality-driven approach ensures your vision becomes a reality. Contact us to transform your future.

Our Team

Ali Rao

CEO

Sheetal Mehta

Project Manager

Alex Morgan

Digital Marketer

Renewable Energy Realization

Unlocking the potential of renewable energy sources through innovation and sustainable solutions. Empowering a greener, more sustainable future.